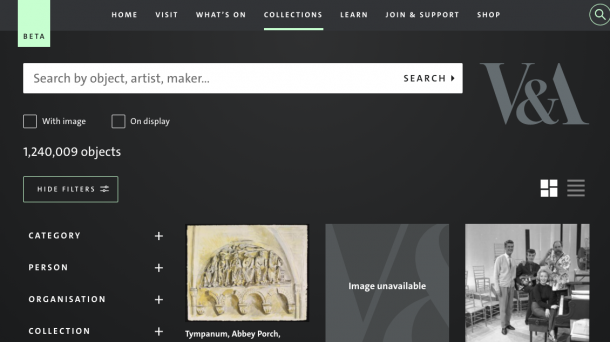

If you’re reading this blog post, hopefully you’ll have seen (and been enjoying using) Explore The Collections (ETC). I’ve been a part of the development team for ETC for over a year and not only am I proud of what we have released, I’m also pleased to see its positive reception. ETC is a huge step forward from our previous collections portal, Search The Collections (STC), and this initial beta release is only the beginning. Development and design are iterative processes and there is always a way to build and improve on a product. Even in its current form, however, ETC represents a big step in making the collections more accessible and available online.

Determining the tech stack

STC was the online portal for the V&A collections for 11 years. During that time, web technology and design standards have made great strides to take advantage of the mass proliferation of more powerful and portable devices. Websites have become more dynamic and can be composed almost entirely of JavaScript. Static pages – already-built web pages that are stored on servers and delivered to the user on request – have been eclipsed by dynamic pages. It is common for pages to consist of a paltry amount of HTML that will be replaced by JavaScript-generated elements. So, after surveying the dynamically-generated landscape, we decided that the new site should use state-of-the-art, cutting-edge … static pages.

The case for static pages in a dynamically generated web

It makes sense to use static pages to display information about our objects because most of the information about each object, such as origin, maker and creation date, remains static. Updates to object records do happen, but the changed objects are such a small subset of the collection that implementing simultaneous updates for ETC would yield little benefit. Scheduled, nightly updates remove the burden on the servers to generate pages on demand. Furthermore, using a minimal amount of JavaScript increases browser compatibility, making the collections usable on as many devices as possible.

A unique challenge we faced when developing ETC was the sheer size of the collections. As I write this post, an empty search of STC returns a results count of 1,240,009 objects. This immense number of records results in a wide spread of potential requests. As a result, a caching system – where a subset of the most recently accessed pages is stored for subsequent requests to avoid more expensive calls to the server – would be mostly ineffectual. By using static instead of dynamically generated pages, each object would be quick to load no matter which of the 3,506 results for “spoon” you wished to view.

Now we faced our next problem. How do we generate 1,240,009 pages?

Generating 1,240,009 pages with Hugo

Hugo is an open-source static site generator. It takes in content (consisting of markdown documents) and templates (written in Go’s templating language) and outputs a static website. We were initially drawn to Hugo because of its claimed speed and because of Victor-Hugo, an easy-to-set-up bootstrap application that integrates Hugo into a Webpack application (which can transpile our code, generating cross-browser compatible JS and CSS).

Constructing components

Before we could generate the pages, we needed to build the templates. We approached this task utilising the Component Driven Development (CDD) paradigm. CDD is an approach to web development which involves breaking down a page into smaller components which are developed in isolation. These smaller components are used to construct groups of elements which will be used to build the entire page. CDD can be thought of as a subset of atomic design.

A standard practice when developing with CDD in mind is to use a component library to contain the separate elements. Component libraries serve multiple purposes: providing a consistent development and test environment for the components; keeping track of what has already been developed and could be incorporated in subsequent components; and making it easy to integrate the components in any new project.

Fortunately, we already have our own Fractal component library. Previously we used it to house the elements and styles of the main V&A website. As ETC was to be more integrated into the main site than STC, this also meant we were able to easily incorporate the already-existing elements, such as buttons, colours and headers, that were to be consistent in style.

After we had built the components, it was a simple matter of installing our Fractal component library as a package in our Victor-Hugo project. We could then build the pages using the components we had created.

The ETC build process

I then wrote a Python script that could automate the build process. To start, we build the JS and CSS for the pages using Victor-Hugo. This also creates a configuration file containing the paths of assets for Hugo to reference when it builds the pages. At this stage, we can also add other information to the Hugo configuration file that can be referenced during page generation.

Next, we build the pages. The object data dump comes in the form of compressed .jsonl files, each containing 10,000 records. Each object record is first converted to a single JSON file. At this point in the process we add extra information to the record, such as links to content on the main site related to the object, that can be displayed on the page.
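To make this step more concrete, here is a minimal sketch of reading one dump file and enriching each record. It is not our actual build script: it assumes the dump is gzip-compressed, and the field names `systemNumber` and `relatedContent`, the `related_links` lookup and both function names are hypothetical, chosen only for illustration.

```python
import gzip
import json
from pathlib import Path
from typing import Iterator


def read_records(dump_path: Path) -> Iterator[dict]:
    """Yield one object record per non-empty line of a gzip-compressed .jsonl file."""
    # Assumption: the dump is gzip-compressed; the post only says "compressed".
    with gzip.open(dump_path, "rt", encoding="utf-8") as handle:
        for line in handle:
            line = line.strip()
            if line:
                yield json.loads(line)


def enrich(record: dict, related_links: dict) -> dict:
    """Return a copy of the record with links to related main-site content added."""
    enriched = dict(record)
    # "systemNumber" and "relatedContent" are hypothetical field names.
    enriched["relatedContent"] = related_links.get(record["systemNumber"], [])
    return enriched
```

Because `read_records` is a generator, only one record is held in memory at a time, which matters less for a 10,000-record file than for the dump as a whole.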

Following this, the object is saved as a markdown file with the record information stored in the page front matter in JSON format. We do this for each of the 10,000 objects and then run Hugo. When completed, the 10,000 pages are moved to the correct location on the server and then those content files are deleted so that the next 10,000 can be generated. This carries on until all the pages are generated.
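The batch loop can be sketched roughly as follows. This is an illustration under stated assumptions rather than our production script: the function names, the `systemNumber` field used for file names and the directory layout (`content` for input, Hugo's default `public` for output) are assumptions. Hugo accepts front matter written as a single JSON object, so each content file here is simply the record serialised as JSON.

```python
import json
import shutil
import subprocess
from pathlib import Path


def write_content_file(record: dict, content_dir: Path) -> Path:
    """Save one record as a markdown file whose front matter is a JSON object."""
    # "systemNumber" is a hypothetical identifier field used for the file name.
    path = content_dir / f"{record['systemNumber']}.md"
    # The file has no body: everything the template needs is in the front matter.
    path.write_text(json.dumps(record, indent=2) + "\n", encoding="utf-8")
    return path


def run_hugo_cli(site_dir: Path) -> None:
    """Run Hugo against the site directory, raising if the build fails."""
    subprocess.run(["hugo", "--source", str(site_dir)], check=True)


def build_batch(records, site_dir: Path, publish_dir: Path, run_hugo=run_hugo_cli) -> int:
    """Write content files for one batch, run Hugo, publish the pages, clean up."""
    content_dir = site_dir / "content"
    output_dir = site_dir / "public"  # Hugo's default output directory
    content_dir.mkdir(parents=True, exist_ok=True)
    written = [write_content_file(record, content_dir) for record in records]
    run_hugo(site_dir)
    # Merge the generated pages into the publish location, then clear the
    # batch's output and content files so the next batch starts clean.
    shutil.copytree(output_dir, publish_dir, dirs_exist_ok=True)
    shutil.rmtree(output_dir)
    for path in written:
        path.unlink()
    return len(written)
```

Calling `build_batch` once per dump file reproduces the loop described above. Passing the Hugo runner in as a parameter is a design choice for this sketch: it lets the file handling be exercised without Hugo installed.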

Results

Considering the number of pages that need to be generated, speed was an important factor when determining which static site generator to use. Hugo did not disappoint in this area and lived up to its claim of being the “world’s fastest framework for building websites”. It manages to generate 10,000 pages in around 30 seconds. So in total it generally takes around an hour to generate the entirety of ETC, which is remarkable for over one million pages! This is a task that will only need to be done in exceptional circumstances, as more commonly a small subset of pages will need to be regenerated.
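As a rough sanity check on that figure, the arithmetic can be worked through in a few lines. This is a back-of-the-envelope estimate only: it assumes the page count matches the 1,240,009 objects quoted earlier and counts just the time Hugo spends per batch, not the time spent converting records or moving files.

```python
import math

TOTAL_PAGES = 1_240_009   # object count quoted earlier in the post
BATCH_SIZE = 10_000       # records per dump file
SECONDS_PER_BATCH = 30    # approximate Hugo run time per batch

batches = math.ceil(TOTAL_PAGES / BATCH_SIZE)    # 125 batches
hugo_minutes = batches * SECONDS_PER_BATCH / 60  # 62.5 minutes

print(f"{batches} batches, about {hugo_minutes} minutes of Hugo time")
```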

Takeaways

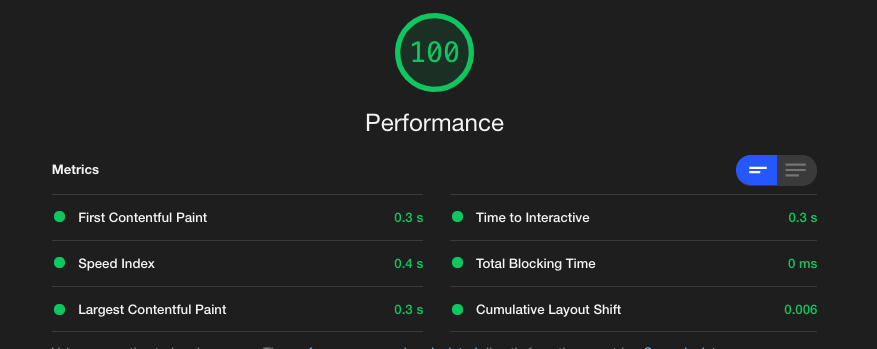

Performance

Hugo’s speed would have been irrelevant if the resulting site were sluggish, but just by using ETC, the performance benefits of static pages are immediately apparent. Click on any object in search results and the page will load almost instantaneously. When running a Google Lighthouse performance test on a random page, it scores 100 for performance.

A dynamic page would have fared worse and taken more time, having first to request a ‘holding page’, then contact the API and receive the object record before finally generating the page.

Lessons learnt

The irony of using actual, HTML-based, old-school web pages and touting their performance benefits over cutting-edge JavaScript-based front-end technology is not lost on me. Perhaps a shallow comparison can be drawn between the trends of web development and fashion – “what’s old is new again”. In web development a familiar trend appears: wide adoption of a new abstraction utilising a new framework or library (perhaps without consideration of how suited that abstraction might be for individual projects), realising that abstraction has an impact on performance, then paring back that abstraction until we are left with the original.

Obviously, while static pages themselves are not new, static page generators like Hugo are. This isn’t the first time we have used Hugo and it won’t be the last. For our Developer’s Site we used Hugo’s markdown processing features to create a static site that’s easy to add content to locally using a text editor or online using a tool like HackMD.

Personally, working on ETC has made me consider the following when working on prospective projects: do I really need to use a framework for this? Are there features native to browsers I can instead use effectively in my project? Can I accomplish what I want more easily and with less set-up using native JavaScript? Sometimes, in a bid to break new ground and learn exciting new systems, we might be overcomplicating things.