Since the 1960s, the development of digital technologies including computers, plotter printers and videogame consoles, as well as later networked technologies such as smartphones and the internet, has transformed the way art and design are produced, disseminated and consumed, and by whom.

Artists and designers have always been at the forefront in exploring the creative potential of emerging technologies. This glossary maps out key terms in the field of digital art and design that relate to the V&A's collections.

3D printing

3D printing refers to the construction of a physical object from a digital model. In the printing process, material is deposited layer-by-layer by the print head. Materials used for this additive process include thermoplastic filaments (see Charlotte Valve and Snorkel Mask), metal, ceramics (see Vase by Ahn Seong Man), sandstone (see Radical Love by Heather Dewey-Hagborg) and titanium (see Pinarello Bolide HR Handlebar).

Hobbyist 3D printing communities often use open-source file sharing to distribute digital models that can be modified to suit new needs. An early open-source 3D printer designed for hobbyists and maker spaces was the MakerBot Thing-O-Matic.

See also: Open-source

Algorithm

An algorithm is a set of instructions to complete a task – this could range from something as simple as following a cooking recipe to the process of creating a complex digital artwork. In computation, an algorithm can be used to solve a problem, perform calculations, process data and undertake automated reasoning.

In early computer art, algorithms were used to generate images from repeating sets of instructions. Examples of algorithmic processes can be seen in early computer works by Vera Molnar, Frieder Nake and Manfred Mohr.
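As a rough illustration of an image generated from a repeating set of instructions, the sketch below repeats one step (draw a square, then shrink and rotate it) and returns the corner coordinates a plotter could follow. The function name `nested_squares` and all of its parameters are invented for this example; it is not a reconstruction of any artist's programme.

```python
import math

def nested_squares(count, size=100.0, shrink=0.85, turn_degrees=10.0):
    """Return `count` squares, each as a list of four (x, y) corners.

    One instruction is repeated: place a square around the origin,
    then make it smaller and turn it slightly before the next pass.
    """
    squares = []
    half = size / 2
    angle = 0.0
    for _ in range(count):
        radius = half * math.sqrt(2)  # distance from the centre to a corner
        corners = []
        for k in range(4):
            # Corners sit 90 degrees apart, starting at 45 degrees.
            theta = math.radians(angle + 45 + 90 * k)
            corners.append((round(radius * math.cos(theta), 2),
                            round(radius * math.sin(theta), 2)))
        squares.append(corners)
        half *= shrink          # next square is smaller
        angle += turn_degrees   # and rotated a little further
    return squares

for corners in nested_squares(3):
    print(corners)
```

Changing `shrink` or `turn_degrees` alters the whole drawing without rewriting any of the steps, which is part of what made this way of working attractive.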

Algorithms imitate logical deduction, making them valuable tools for automated data processing with many applications in artificial intelligence. Everyday uses include web search, streaming platforms, online dating, and voice assistants.

See also: Generative art; Artificial intelligence

Artificial intelligence

Artificial intelligence (AI) refers to automated thinking processes and decisions made by computers. Data is used to teach machines how to learn, solve problems and read information. Today, AI is used across different industries including finance, healthcare, agriculture, autonomous vehicles, robotics, facial recognition, image tagging and natural language processing.

AI can be classed as weak or strong. Weak AI embodies a system designed to carry out one particular job, for example the video and image capturing Google Glass. Strong AI remains a theoretical form of machine intelligence that simulates, or gives the impression of, human intelligence. Both weak and strong AI are associated with contentious issues including algorithmic bias, data privacy, and the automation of work.

See also: Machine learning

Augmented reality

Augmented reality (AR) refers to a set of technologies used to enhance the physical world, visually superimposing images and digital content to inhabit a physical space, often via a screen interface. This interface takes the form of smart glasses, head-mounted display devices (HMD), and smartphones.

The first truly immersive AR system, Louis Rosenberg's Virtual Fixtures, was developed in 1992. However, AR has its roots in 1960s military flight applications, where displays overlaid information directly onto a pilot's visual field, removing the need to look away when in flight. Today, AR has applications in manufacturing, medicine, e-commerce, digital art, social media and gaming.

See also: Virtual reality

Computer art

Computer art is art made using a computer as a tool or medium. The term is distinct from digital art and new media art, instead indicating work made between 1950 and 1990 that focuses on algorithmically generated forms created using bespoke software. Pioneering computer art originated from collaborations between creatives and scientists, mathematicians, programmers and engineers, who gained access to computing equipment through partnerships with laboratories, universities and corporations, such as Bell Labs.

The V&A holds one of the world's largest computer art collections, including the Computer Arts Society collection, the Patric Prince archive, and prints from the pioneering 1968 ICA show Cybernetic Serendipity.

See also: Algorithm

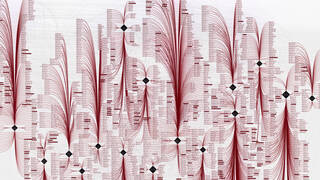

Data visualisation

Data visualisation is the representation of information and complex data sets in the form of charts and tables, infographics, real-time animation and other graphical methods.

Data visualisation has its roots in statistics, but in the early 21st century, it is an essential tool for analysing large amounts of information. As a practice, it is increasingly understood as a mode of design and storytelling, and its uses go beyond data-driven decision making. It plays an important role in economics, science, healthcare, political communications and journalism. In addition, data visualisation is increasingly used as a tool for digital activism on social media sites, such as Instagram and Twitter.

Digital art

Digital art comprises a variety of creative practices that use digital technologies to produce artworks. The artworks can be created using software and hardware tools, applying technical processes such as coding, 3D modelling, and digital drawing. Examples include computer, generative, robotic, kinetic, post-internet, virtual reality and augmented reality art, and works created on the internet. Digital art most commonly exists in digital form but can be translated to physical materials, programmed to be interactive or accessed remotely via a digital device (e.g. smartphone).

Digital design

The term refers to both design processes and products for which digital technology is an essential element. Digital design works across both the physical and the digital domain, and ranges from crafted and DIY products to mass-produced, commercial and industrial design solutions.

The definition of digital design is under constant revision as new technologies emerge, and commercial processes and global creative practices develop. Some examples of digital design include web design and mobile apps; user interface design; social media platforms; data visualisation; 3D models; animation; videogames, augmented and virtual reality; physical computing systems including mobile phones and smart devices; computer programming and software packages.

Emoji

Emoji, meaning 'picture character' in Japanese, are small pictograms used in text and online messaging. Predated by text-based emoticons, emojis were first introduced in Japan in 1997 and reached a wider audience in the late 2000s following their inclusion in the Apple iPhone operating system. In 2010, emojis were standardised by Unicode, the universal standard for consistent encoding of character-based electronic communication, and by 2015 more than 90% of the world's online population were using emoji in their communications.
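To make the idea of standardised encoding more concrete, the short sketch below looks up the number Unicode assigns to one emoji, its official character name, and the bytes used to store or send it. The choice of the grinning face emoji is arbitrary; the calls are from Python's standard library.

```python
import unicodedata

emoji = "\U0001F600"  # the grinning face emoji, written as an escape code

code_point = ord(emoji)             # the number Unicode assigns to the character
name = unicodedata.name(emoji)      # the official Unicode character name
utf8_bytes = emoji.encode("utf-8")  # how the character is stored or transmitted

print(f"U+{code_point:X}", name, utf8_bytes.hex(" "))
```

Because every device agrees on the same number for the same pictogram, a message sent from one phone can be displayed on another, even though each platform draws the emoji in its own style.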

Together with GIFs and memes, emojis are common expressions of online supra languages – languages which are intuitive, expressive and simpler to grasp than longer spoken or written text.

See also: GIF; Meme

Generative art

Generative art and computer art were frequently used interchangeably in the 1960s. The first computer/digital art exhibition, titled Generative Computergraphik (Generative computer graphics), was organised by Max Bense at the Galerie Wendelin Niedlich, Stuttgart in 1965. The meaning of 'generative art' has evolved over the years, and now refers to pieces that use a set of rules to make an artwork. The artist creates a set of rules for the computer to interpret in order to generate the final output. Generative art is therefore a type of computer art, although not all computer art is generative.
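A minimal sketch of this rule-based approach, assuming a single invented rule: for every cell in a grid, pick one of two diagonal strokes at random. The function name `generate_pattern` and the seed value are illustrative only and do not represent any particular artist's method.

```python
import random

def generate_pattern(width, height, seed):
    """Fill a grid by applying one rule to every cell: pick a diagonal at random."""
    rng = random.Random(seed)  # a fixed seed makes the same output repeatable
    rows = []
    for _ in range(height):
        rows.append("".join(rng.choice("/\\") for _ in range(width)))
    return "\n".join(rows)

print(generate_pattern(width=40, height=8, seed=1965))
```

The artist writes the rule and chooses the parameters; the computer carries out the many small decisions. A different seed gives a different image from the same rule.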

Casey Reas is a generative artist who uses a process of rule-based coding to create a desired image. Reas acknowledges the importance of software, labelling it as a "natural medium" which can encompass "how the world operates".

See also: Algorithm; Computer art

GIF

GIF stands for Graphics Interchange Format. GIFs can be still images, but more popularly are used for short, looping animations. They are made using sequential images, so unlike videos, they do not have sound. The GIF format was developed in 1987 by Stephen Wilhite at CompuServe as a way to distribute "high-quality, high-resolution graphics" in colour.

In the 1990s, the GIF was one of two image formats commonly used on websites, and the only colour format. By the early 2000s, GIFs were widely used across personal web platforms such as Geocities. Their use for personal expression in online communications grew further with the rise of social media platforms in the 2010s. Typically containing recognisable images from pop culture, and sometimes accompanied by a short text, GIFs are now recognised as a universal language for conveying humour, sarcasm, and angst.

Although GIFs soon came to be considered low-quality compared to other image formats, they remain popular in part because they are easy to make and distribute, and are supported across different browsers and websites. GIF-making sites, including GIPHY, which launched in 2013, contribute to the format's popularity by enabling users to search, make and share GIFs.

See also: Meme; Emoji

Hardware

The term 'hardware' refers to the physical components of a computer system. Hardware is typically combined with software to form a usable, interactive computing system. While computing hardware is essential for the running of software programmes, hardware-only computing systems also exist. For a personal computer, the hardware can include the desktop unit, the monitor and keyboard, as well as the internal elements, such as the circuit board and graphics card. Hardware has reduced in size considerably over time and personal electronic devices including smartwatches are also considered computing hardware.

Hardware can break over time when mechanical parts fail or due to chemical breakdown. Another mode of breakdown is planned obsolescence, where new generations of software and operating systems are incompatible with the hardware, and the existing software becomes unsupported, preventing the computer from functioning properly.

See also: Software; Programming; The internet of things

Interface

A person communicates with a computer via the interface, often with hardware. For example, on a desktop computer, the screen, keyboard, and mouse allow a user to input information to the computer through a user interface to create the desired result. The idea for the user interface was developed in the 1960s, with the first interface-based computers introduced in the 1970s.

For familiarity and ease of use, the interface was designed as a 'desktop', where the screen resembled the work desk, with folders of files and programmes arranged spatially on screen. Before this, computer interaction was complex and required specialist knowledge. Early examples of computer art, for instance, required lengthy input through code – with no real-time visualisation – and required a machine such as an ink plotter to execute visual output. In contrast, in the 21st century, interfaces are predominantly visual. Websites and apps are typically designed to be highly intuitive, to improve the user experience (UX), and to encourage the user to continue using the platform.

See also: Computer art

Internet of things

The internet connectivity of everyday items, which allows them to wirelessly collect and communicate data without human involvement, is referred to as the 'Internet of Things'. The first experiments were undertaken in the 1980s and 1990s, and the term 'Internet of Things' was coined in 1999. The concept took off in the 21st century, and now almost any kind of object – from vending machines and traffic lights to fridges, doorbells and chairs – can exist as an Internet of Things device.

Connected objects offer convenience and increased data visibility for users and can sometimes make devices more accessible for disabled people. However, they also carry a risk of data being misused or stolen, or even of the device being hacked, as was the case with the voice-activated Hello Barbie and the eRosary.

Machine learning

In machine learning, training data is fed into an algorithm, which in turn changes and adapts based on the information it receives. While the initial algorithm is programmed, it can make predictions and decisions that are not programmed based on the data it is trained on, and the more data an algorithm receives, the 'smarter' it can become. Machine learning is part of the broader concept of artificial intelligence.
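The sketch below is one very small instance of this idea, assuming a simple method called gradient descent and a handful of made-up training examples. The programme is never told the pattern in the data; it adjusts two numbers step by step until its guesses fit, then makes a prediction for an input it was never shown. The function name `train` and the data are invented for illustration.

```python
def train(examples, steps=5000, learning_rate=0.01):
    """Fit y = weight * x + bias to (x, y) examples by gradient descent."""
    weight, bias = 0.0, 0.0
    n = len(examples)
    for _ in range(steps):
        # Measure how wrong the current guess is across all examples ...
        grad_w = sum((weight * x + bias - y) * x for x, y in examples) / n
        grad_b = sum((weight * x + bias - y) for x, y in examples) / n
        # ... then nudge both numbers in the direction that reduces the error.
        weight -= learning_rate * grad_w
        bias -= learning_rate * grad_b
    return weight, bias

# Hypothetical training data that happens to follow the pattern y = 2x + 1.
training_data = [(0, 1), (1, 3), (2, 5), (3, 7), (4, 9)]

weight, bias = train(training_data)
prediction = weight * 10 + bias  # an input that was not in the training data
print(round(weight, 2), round(bias, 2), round(prediction, 1))
```

Real systems use far more data and far more adjustable numbers, but the loop of guessing, measuring the error and adjusting is broadly similar.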

Popular examples include Amazon Echo, which uses machine learning to improve the performance of the voice assistant 'Alexa', and the Nest Thermostat, which uses machine learning to automatically regulate the temperature of a home by collecting data about the owner's living habits and schedule.

Machine learning has also been the subject of artistic commentary. In her work Words that remake the world. (Vision of the Seeker 2016-2018), Nye Thompson presents a diagram of how an AI system sees the world through the images it is "fed".

See also: Artificial intelligence

Open-source

Open-source refers to computer hardware and software that is made available for the public to use, study, edit, and iterate. Sometimes the creator will hold the copyright and release it under an open-source licence that dictates certain conditions for use, such as that any derivative works must also remain open-source. At other times, open-source is created collaboratively from the outset.

Open-source software was commonplace in the 1950s and 1960s, at which point it was referred to as 'free software'. Universities and academics led and shared software developments by making the source code publicly available. However, with growing commercialisation from the late 1960s, access to software became increasingly restricted. Interest in free software resurged in the 1980s and 1990s, particularly as access to the internet increased and online communities grew. At this point, the emphasis shifted from 'free' to 'open source' as a development methodology with an emphasis on community production, peer review and shared custodianship.

Meme

Though it first gained popularity in the 2000s, the term meme was coined in the 1970s by Richard Dawkins in the book The Selfish Gene (1976). He understood a meme, from the Greek word mimema, meaning 'imitated', to carry information that travels amongst people and evolves as it is transferred. The term has grown culturally to describe a piece of digital content (whether image, video or otherwise) and text that evolves as it is shared. The combination of the two elements often leads to humour or satire, though not in all cases.

The most popular way of sharing memes is via the internet (an 'internet meme'), and, when shared widely, a meme is said to 'go viral'. Viral memes often consist of an image posted on the internet for a period of time, whether hours or years, that then becomes popular in an altered form. This can include adding and altering text, combining with another image, and removing or focusing on the background of the image.

NFT

NFT stands for non-fungible token. These tokens are commonly linked to digital assets including digital artworks, memes, and text. NFTs are unique pieces of data that can be hosted on some blockchains (see definition below), such as Ethereum or Tezos. These blockchains act as a 'digital ledger', creating a record of ownership.

Rather than the asset itself, an NFT is more like a contract of ownership, recording data including sales and trading information. As the name suggests, NFTs are not fungible, or, in other words, they are unique and not interchangeable. The technology that first made this possible was the ERC-721 protocol, a code written by Dieter Shirley for the Ethereum blockchain in 2018. Since then, NFTs have formed an important part of the digital art landscape.
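The idea of a token as a record of ownership, rather than the asset itself, can be sketched with a toy ledger. This is an assumption-laden illustration only: `TokenRegistry` and its methods are invented names, it is not the ERC-721 interface, and it runs on one computer rather than on a blockchain.

```python
class TokenRegistry:
    """Toy ledger: maps each unique token ID to an owner and keeps a history."""

    def __init__(self):
        self.owners = {}   # token ID -> current owner
        self.history = []  # every mint and transfer, in order

    def mint(self, token_id, owner, asset_uri):
        # Each token ID may exist only once: this is what makes it non-fungible.
        if token_id in self.owners:
            raise ValueError("token IDs must be unique")
        self.owners[token_id] = owner
        # The ledger stores a pointer to the asset, not the asset itself.
        self.history.append(("mint", token_id, None, owner, asset_uri))

    def transfer(self, token_id, sender, recipient):
        if self.owners.get(token_id) != sender:
            raise PermissionError("only the current owner can transfer a token")
        self.owners[token_id] = recipient
        self.history.append(("transfer", token_id, sender, recipient, None))


registry = TokenRegistry()
registry.mint(1, "alice", "https://example.org/artwork.png")  # hypothetical asset
registry.transfer(1, "alice", "bob")
print(registry.owners[1], len(registry.history))
```

Note that the artwork file is never stored in the registry; only a link to it and the sequence of owners are recorded.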

While there has been excitement in the early 2020s about the potential for NFTs to disrupt art markets and better financially remunerate artists, they have also received criticism. This often concerns the large carbon footprint associated with 'minting' (or creating) most NFTs. Questions also remain about how secure, and legally sound, NFTs are.

Some established artists working in the field of digital art have minted NFTs, while others have been drawn in from other professions. NFTs have also spurred interest in new kinds of collecting, including 'collectables' like Cryptokitties or Bored Apes.

See also: Blockchain; Digital art

Blockchain

The blockchain is a shared digital ledger of transactions. It can be used for cryptocurrencies, a form of digital currency, or for non-fungible tokens (NFTs), a kind of record of ownership of digital assets.

The first use of blockchain technology was for bitcoin, a cryptocurrency created in 2008 by Satoshi Nakamoto, an unidentified individual or group. Blockchain technology was essential for the development of cryptocurrency, since the secure record of transactions meant that no centralised regulatory body was required.
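One reason such a record is hard to alter can be shown with a simplified sketch, assuming a ledger in which every block stores a fingerprint (a hash) of the block before it. The function names and transaction text are invented, and real blockchains add much more, such as a network of participants who must agree on each new block.

```python
import hashlib
import json

def block_hash(block):
    """Return a SHA-256 fingerprint of a block's contents."""
    payload = json.dumps(block, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()

def add_block(chain, transactions):
    """Append a block that records the fingerprint of the previous block."""
    previous = block_hash(chain[-1]) if chain else "0" * 64
    chain.append({
        "index": len(chain),
        "transactions": transactions,
        "previous_hash": previous,
    })

def is_valid(chain):
    """Check that every block still matches the fingerprint stored after it."""
    return all(
        chain[i]["previous_hash"] == block_hash(chain[i - 1])
        for i in range(1, len(chain))
    )

ledger = []
add_block(ledger, ["alice pays bob 5"])
add_block(ledger, ["bob pays carol 2"])
add_block(ledger, ["carol pays dan 1"])
print(is_valid(ledger))

ledger[1]["transactions"] = ["bob pays carol 200"]  # tamper with an old entry
print(is_valid(ledger))
```

Changing an earlier block changes its fingerprint, so it no longer matches the value recorded in the following block and the tampering becomes visible.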

There have been artistic experiments with the blockchain, as well as off-chain artworks that comment on the blockchain, which pre-date the invention of NFTs in 2018. Since then, it has been possible to create NFTs on certain blockchains, making it an important technology for some digital creators.

See also: NFT

Computer programming

Computer programming is the process of writing instructions for how a computer system or application performs. These instructions, commonly called code, are written in programming languages.

Programmes have been used since the 1800s to direct the behaviour of machines, beginning with the use of punched cards to control musical boxes and the Jacquard Loom. In 1843, Ada Lovelace published a sequence of steps for Charles Babbage's unrealised computing machine, the Analytical Engine, for which she is widely regarded as the first computer programmer.

Today there are thousands of programming languages with different intended uses, and it is common for multiple languages to be combined to write an individual project. However, there are popular, general-purpose programming languages such as C++, JavaScript and Python. As programming languages are continually being developed, older languages become obsolete. Sometimes, this legacy code remains as an existing base that has been added to, where updating the original language is deemed too expensive or disruptive.

The oldest programming language still in use today is Fortran, released in 1957. Fortran is used in works including Dominic Boreham's IM21 (B5) P0.5 3.X.79. Early computer programmes were stored on punched cards, including Manfred Mohr's P032 (Matrix Elements). Written by the artist in Fortran, this programme instructed a plotter device to produce the drawing P-32 (Matrix Elements).

Software

Software is a set of instructions, data, and programmes used to execute specific tasks on computing devices including personal computers, tablets, smartphones, and smart devices. All software requires a hardware device to run, and without software most computing hardware would be non-operational.

Software is understood in two categories: application software and system software. Application software (such as word processing packages and web browsers) consists of discrete software packages that facilitate specific tasks. In contrast, system software (operating systems like macOS or Android) serves as the interface between such applications and the computing hardware. System software is vital to the running of application software.

Application software gained popularity with the arrival of personal computers in the 1970s and 80s. The VisiCalc electronic spreadsheet software is a key example. Launched in 1979 for use on the Apple II personal computer, it is now considered the first 'killer app' – a piece of application software that is deemed virtually indispensable and assures the success of a computing system. Following the release of the Apple iPhone in 2007, and subsequent smartphone developments, it became increasingly common for people to have ready access to and rely on application software for many daily tasks and activities such as messaging and diary management.

See also: Hardware; Programming

Source code

Source code refers to the human-readable version of a computer programme as it is originally written in a programming language before translation into machine-readable object code. In short, source code is written and read by humans in alphanumerical characters (plain text). To be read and executed by a computer, source code must be translated into object code using a specific programme called a compiler. The object code (machine code or machine language) is binary, comprising only 1s and 0s.
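The translation step can be glimpsed using Python's built-in `compile` function. One caveat: Python translates source code into bytecode for its own virtual machine rather than into the processor's native machine code, so this is an analogy for the compiler described above, not an exact match. The one-line programme is invented for the example.

```python
import dis

source = "total = 2 + 3"  # human-readable source code, as plain text

# Translate the text into a form the Python virtual machine can execute.
code_object = compile(source, "<example>", "exec")

print(code_object.co_code.hex(" "))  # the translated instructions as raw bytes
dis.dis(code_object)                 # the same instructions, labelled for humans

namespace = {}
exec(code_object, namespace)         # run the translated form
print(namespace["total"])
```

The first line printed is a run of byte values that means little to a human reader, while the original text `total = 2 + 3` is easy to read and edit.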

Artists who use programming to create artworks use a programming language to write source code. Source code also forms a part of all computer-enabled objects.

See also: Programming; Software

Virtual reality

Virtual reality (VR) refers to an immersive 3D visual environment constructed using computer graphics or 360-degree video. The environment is typically accessed via a head-mounted display headset, such as the Oculus Rift, but other forms exist such as CAVE VR, where projected images fill the walls of a physical room.

Virtual reality is typically interactive, and users can use a controller or gesture to move around the virtual environment. In contrast, 360-degree video uses a fixed perspective, so is more akin to an immersive version of a film. In both modes of VR, the user's physical orientation and gaze direction are tracked, and the image is constructed in real-time in response.

The conceptual development of VR can be seen in the first navigable virtual environment created in 1977 by David Em whilst artist-in-residence at NASA's Jet Propulsion Lab. Approach and Aku in the V&A collections document Em's early simulated worlds.

VR contrasts with augmented reality since it is purely virtual and does not incorporate physical space or objects. A user of the Oculus Rift headset will be entirely cut off from visuals of the physical space they are in.

See also: Augmented reality